Books / Sorting Algorithms in Computer Science / Merge Sort

Merge Sort

Overview of Merge Sort

Merge sort is one of the earliest sorting algorithms. The algorithm was developed by John von Neumann in 1945. It is an efficient algorithm which uses divide and conquer strategy to sort a list of elements. The basic idea is very simple: divide the array in half, sort each half separately, and then merge the sorted halves into a single array again. Of course each half is sorted using the merge sort algorithm, which is why it is recursive. And of course, like any recursive algorithm, it has a base case, which is simply that when the array to be sorted has just two elements, they are simply compared and swapped if necessary. The “merge” part of the algorithm does the real work; its job is to compare the elements in each half of the array, moving the smaller of the two it compares into the “output” array. Merge sorting is easiest when there is a spare array equal in size to the original array.

Merge Sort Algorithm

Basic idea:

- Divide the input array into two subarrays of roughly equal size.

- Recursively merge sort each of the subarrays.

- Merge the newly-sorted subarrays into a single sorted array.

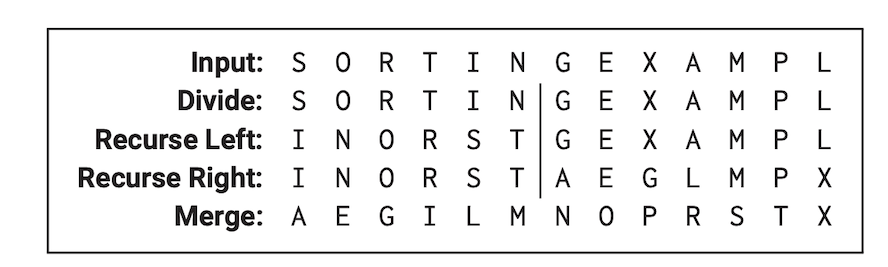

A mergesort example

The first step is completely trivial-just divide the array size by two-and we can delegate the second step to recursion. All the real work is done in the final merge step. To keep the recursive structure clear, I’ve extracted the merge step into an independent subroutine. The merge algorithm is also recursive-identify the first element of the output array, and then recursively merge the rest of the input arrays.

Note: The array index starts at 1 instead of 0 in the pseudo-code below.

MergeSort(A[1 .. n]):

if n > 1

m = n/2

MergeSort(A[1 .. m]) // Recurse

MergeSort(A[m + 1 .. n]) // Recurse

Merge(A[1 .. n], m)

The pseudo code for Merge() is given below:

Merge(A[1 .. n], m):

i = 1; j = m + 1

for k = 1 to n

if j > n

B[k] = A[i]; i = i + 1

else if i > m

B[k] = A[j]; j = j + 1

else if A[i] < A[j]

B[k] = A[i]; i = i + 1

else

B[k] = A[ j]; j = j + 1

for k = 1 to n

A[k] = B[k]

Merge() function correctly merges the subarrays A[1...m] and A[m+1...n], assuming those subarrays are sorted in the input.

Proof: Let A[1...n] be any array and m any integer such that the subarrays A[1...m] and A[m+1 . . n] are sorted. We prove that for all k from 0 to n, the last n-k-1 iterations of the main loop correctly merge A[i . . m]and A[j . . n] into B[k . . n]. The proof proceeds by induction on n-k+1, the number of elements remaining to be merged.

If k>n, the algorithm correctly merges the two empty subarrays by doing absolutely nothing. (This is the base case of the inductive proof.) Otherwise, there are four cases to consider for the k th iteration of the main loop.

- If

j>n, then subarrayA[j . . n]is empty, \(\operatorname{so~} \min (A[i . . m] \cup A[j . . n])=A[i]\). - If

i>m, then subarrayA[i . . m]is empty, \(\operatorname{so~} \min (A[i . . m] \cup A[j . . n])=A[j]\). - Otherwise, if

A[i]<A[j], then \(\min (A[i . . m] \cup A[j . . n])=A[i]\). - Otherwise, we must have \(A[i] \geq A[j]\), and \(\min (A[i . . m] \cup A[j \ldots n])=A[j]\). In all four cases, \(B[k]\) is correctly assigned the smallest element of

A[i . . m]\(\cup\)A[j . . n]. In the two cases with the assignment \(B[k] \leftarrow A[i]\), recursion correctly merges. In other words, the Induction Hypothesis implies that the lastn-kiterations of the main loop correctly mergeA[i+1 . . m]andA[j . . n]intoB[k+1...n]. Similarly, in the other two cases, recursion also correctly merges the rest of the subarrays.

MergeSort() correctly sorts any input array A[1...n].

Proof: We prove the theorem by induction on n. If n <= 1, the algorithm correctly does nothing. The induction hypothesis implies that our algorithm correctly sorts the two smaller subarrays A[1 . . m] and A[m+1 . . n], after which they are correctly merged into a single sorted array.

Analysis of Merge sort (Time and Space Complexity)

- Worst-case performance: O(N Log N)

- Worst-case space complexity: O(N)

More details below.

Because the merges algorithm is recursive, its running time is naturally expressed as a recurrence. MERGE clearly takes O(n) time, because it’s a simple for-loop with constant work per iteration. We immediately obtain the following recurrence for MERGESORT:

\[T(n)=T(\lceil n / 2\rceil)+T(\lfloor n / 2\rfloor)+O(n) .\]As in most divide-and-conquer recurrences, we can safely strip out the floors and ceilings (using a technique called domain transformations described later in this chapter), giving us the simpler recurrence \(T(n)=2 T(n / 2)+O(n)\). The “all levels equal” case of recursion tree method immediately implies the closed-form solution T(n)=O(n log n).

Common Merge Sort Questions

What is Merge Sort?

Merge Sort is a sorting algorithm that divides an array into two halves, sorts each half recursively, and then merges the sorted halves back together to produce a fully sorted array.

What is the time complexity of Merge Sort?

Merge Sort has a time complexity of O(n log n) in the worst case, best case, and average case. This makes it a very efficient sorting algorithm for large datasets.

What is the best case time complexity of Merge Sort?

The best case time complexity of Merge Sort is O(n log n). This occurs when the array is already sorted or nearly sorted, so the algorithm can quickly merge the subarrays together. In practice, however, it is difficult to achieve this best case scenario.

What is the space complexity of Merge Sort?

The space complexity of Merge Sort is O(n). This is because it creates temporary arrays during the sorting process, but these arrays are eventually merged back together to produce a fully sorted array. The amount of extra memory required is proportional to the size of the array being sorted.

Is merge sort in-place sorting algorithm?

No, merge sort does not qualify as an in-place sorting algorithm because it copies more than a constant number of elements at some time.

Is merge sort adaptive?

No. In adaptive algorithms, the complexity (running time, performance) changes based on whether or not the input array is sorted. They perform better when the input is sorted. Merge sort is not adaptive because it uses O(N log N) comparisons even when the input is already sorted.

Is merge sort stable?

Yes, majority of implementations are stable, that is the order of equal elements is the same in the input and output

Is merge sort divide and conquer?

Yes. A divide and conquer algorithm continuously breaks down a bigger problem into sub-problems, until they become simple enough to be solved directly. Merge sort is a divide and conquer algorithm.

Code Examples

Merge sort in Java

class sorting {

public static void merge(int[] left_arr,int[] right_arr, int[] arr,int left_size, int right_size){

int i=0,l=0,r = 0;

//The while loops check the conditions for merging

while(l<left_size && r<right_size){

if(left_arr[l]<right_arr[r]){

arr[i++] = left_arr[l++];

}

else{

arr[i++] = right_arr[r++];

}

}

while(l<left_size){

arr[i++] = left_arr[l++];

}

while(r<right_size){

arr[i++] = right_arr[r++];

}

}

public static void mergeSort(int [] arr, int len){

if (len < 2){return;}

int mid = len / 2;

int [] left_arr = new int[mid];

int [] right_arr = new int[len-mid];

//Dividing array into two and copying into two separate arrays

int k = 0;

for(int i = 0;i<len;++i){

if(i<mid){

left_arr[i] = arr[i];

}

else{

right_arr[k] = arr[i];

k = k+1;

}

}

// Recursively calling the function to divide the subarrays further

mergeSort(left_arr,mid);

mergeSort(right_arr,len-mid);

// Calling the merge method on each subdivision

merge(left_arr,right_arr,arr,mid,len-mid);

}

public static void main( String args[] ) {

int [] array = {12,1,10,50,5,15,45};

mergeSort(array,array.length);

for(int i =0; i< array.length;++i){

System.out.print(array[i]+ " ");

}

}

}

Let’s review how the merge method works:

-

The first while loop from lines 7 to 15 checks if the value of both the iterators is less than the respective array size, then it compares which of the element in right_arr or left_arr is smaller than the other and chooses that one to place in arr.

- The second while loop from lines 16 to 18 checks that if only elements in the left_arr are left then simply append them to arr

- The third while loop from lines 19 to 21 does the same but for the right_arr.

Merge sort in Python

def mergeSort(myList):

if len(myList) > 1:

mid = len(myList) // 2

left = myList[:mid]

right = myList[mid:]

# Recursive call on each half

mergeSort(left)

mergeSort(right)

# Two iterators for traversing the two halves

i = 0

j = 0

# Iterator for the main list

k = 0

while i < len(left) and j < len(right):

if left[i] <= right[j]:

# The value from the left half has been used

myList[k] = left[i]

# Move the iterator forward

i += 1

else:

myList[k] = right[j]

j += 1

# Move to the next slot

k += 1

# For all the remaining values

while i < len(left):

myList[k] = left[i]

i += 1

k += 1

while j < len(right):

myList[k]=right[j]

j += 1

k += 1

myList = [54,26,93,17,77,31,44,55,20]

mergeSort(myList)

print(myList)